Predicting AI Cyberattack Strategic Surprise

AI Will Change the Cyber Threat Landscape

A recurring claim among certain experienced cybersecurity practitioners is that AI will not meaningfully change cyber offense. They acknowledge that AI is shifting the speed and scale of attacks, but argue that attack sophistication will not increase and that AI will not execute novel attack techniques. This post will test that claim.

Speed, Scale, Sophistication: Example Evidence

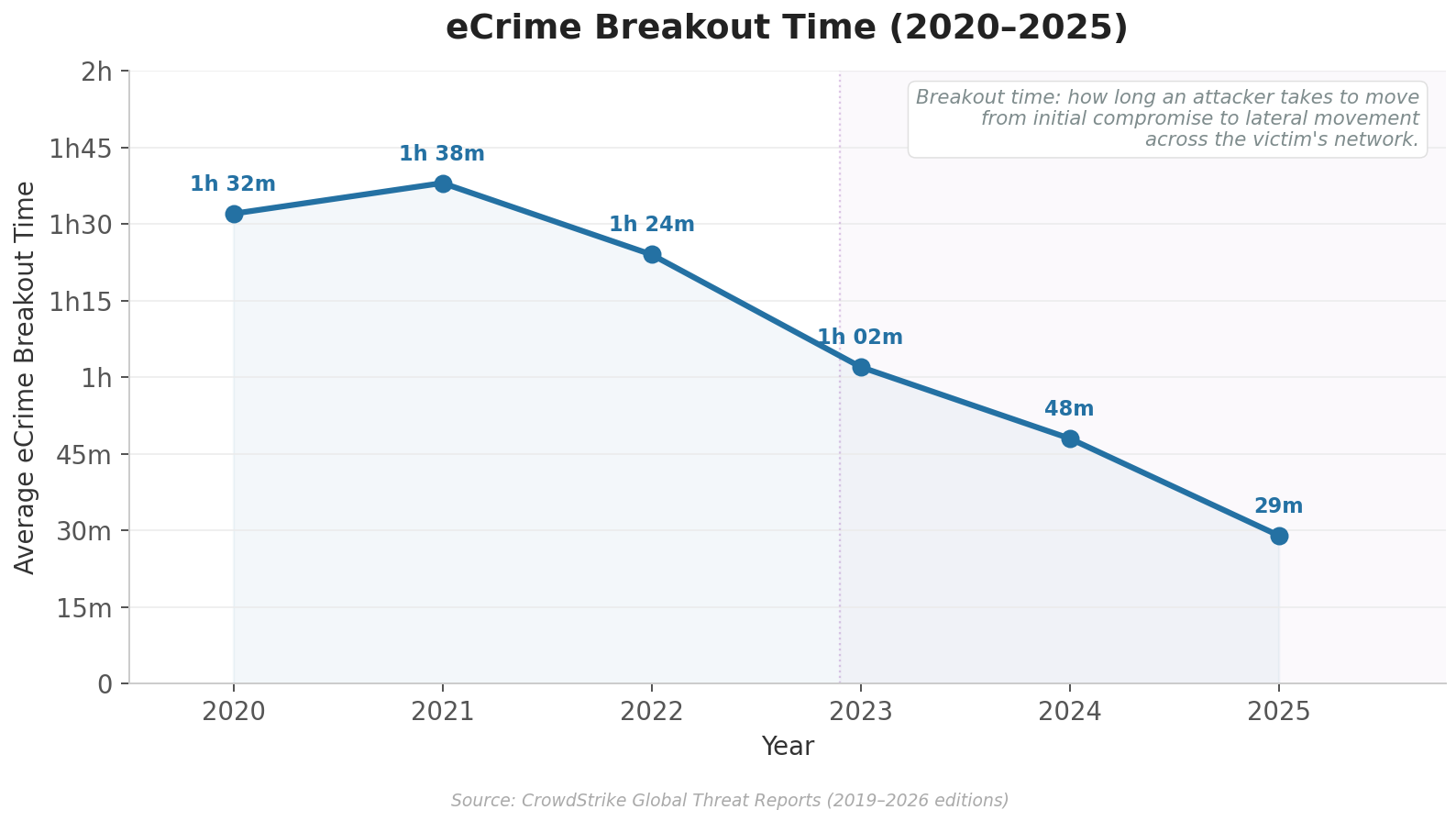

Speed: The time between initial access and full compromise is collapsing. CrowdStrike's 2026 Global Threat Report recorded the fastest eCrime breakout time at 27 seconds, with the average dropping to 29 minutes, which is a 65% increase in speed from 2024. AI will further compress the decision loop between attack steps.

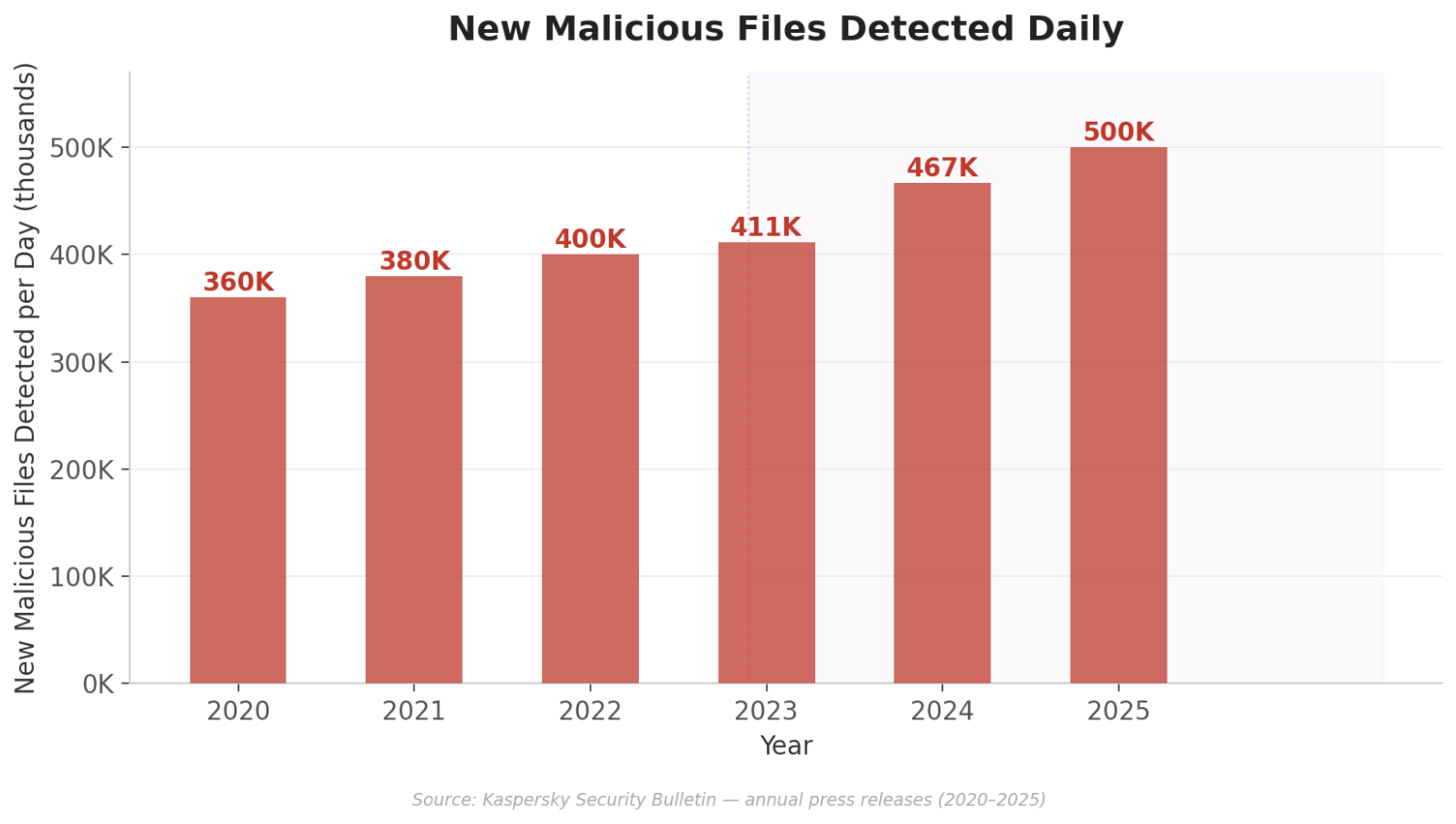

Scale: Everbridge’s 2026 outlook described 2025 as an inflection point where threat actors demonstrated that jailbroken or custom-trained models could automate most of the intrusion lifecycle (reconnaissance, exploitation, credential theft, lateral movement, and exfiltration) across many targets at once. Furthermore, the Kaspersky data below on new malicious files discovered daily over the last several years offers one indication of how the scale of malicious activity has grown in the LLM era. With the explosion of AI use in offensive operations, the pace of increase will undoubtedly rise further.

Sophistication: This one is more controversial, but over the next several years sophistication could prove to be more significant than speed and scale. There is growing evidence that AI is raising the "sophistication floor" of cyber operations. Novel orchestration capability means that less sophisticated actors now have powerful tools that make them more capable. Anthropic's November 2025 disclosure of the GTG-1002 campaign showed that AI agents automated major portions of the kill chain across roughly 30 targets, with humans only approving key decision points. Though the techniques themselves were not novel, the autonomous orchestration element was.

So the skeptics referenced at the beginning of this post do have a point. So far, individual techniques aren't new, and there is little, if any, publicly available evidence of greater sophistication among the most advanced attackers.

What Does "Novel" Actually Mean?

Definitional ambiguity makes this question hard to answer. I see at least two definitions.

The first is novelty by combination: a new sequence of known techniques and exploits. The individual components are all in the MITRE ATT&CK framework, but the attack composition is novel. Talented human hackers already do this, and so do AI agents, but agents can traverse decision trees of possible attack paths faster and more comprehensively, selecting attack chains that avoid detection logic. An explosion of this activity orchestrated by AIs will certainly feel unprecedented and overwhelming to defenders.

The second is strategic surprise: an attack nobody knew was possible. In 2016, AlphaGo played Move 37 against Lee Sedol, one of the best Go players in the world. Human experts initially dismissed the move as a mistake, yet it proved pivotal to AlphaGo winning the game. Move 37 is evidence that AI can produce strategic surprise. The cyber domain is not exempt from this. Our cyber-physical world is full of weaknesses we don’t see, and I am not merely referring to the endless number of new software vulnerabilities that code security agents discover every day. An AI agent exploring this problem space at machine speed, unburdened by assumptions about what constitutes a valid cyber attack path or attack objective, will find attack routes that are currently unknown to us.

How 0Labs is Working to Mitigate Strategic Surprise

In order to defend against the proverbial cyber Move 37, we must predict how agentic hacking will behave.

Our evasion research seeks to move in this predictive direction. 0Labs uses AI agents to conduct real-world attack paths against production-grade detection stacks, iterating in a feedback loop and studying where detection logic and security controls fail. We started with the BlackSuit ransomware chain, a well-documented operation, using TTPs most enterprise detections are built to catch. Here are two interesting examples of hacking agents adapting and finding new paths. These examples represent just the beginning of what is possible here.

T1078 — Valid Accounts. The agent originally attempted to create a backdoor admin account via net.exe. The standard SIEM detection caught the obvious process chain. The attacking agent then iterated by wrapping the same commands in a WMI call. The execution then flowed through WmiPrvSE.exe and the detection stack failed to catch it. This achieved the same objective with a different execution path. Our 0Labs defensive agent responded by replacing the process chain detection rule with one that detects account creation followed by admin group modification, regardless of how it was launched. This is how we begin to defend against adaptive evasion.
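To make the difference between the two rules concrete, here is a minimal Python sketch of an outcome-based correlation. It is an illustration only, not the 0Labs rule. It assumes events have already been normalized into a hypothetical `Event` record; the field names, the 10-minute window, and the `find_backdoor_admins` helper are all assumptions. The event IDs are the standard Windows Security log IDs for account creation (4720) and addition to a security-enabled local group (4732).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Standard Windows Security event IDs.
ACCOUNT_CREATED = 4720        # a user account was created
ADDED_TO_LOCAL_GROUP = 4732   # a member was added to a security-enabled local group


@dataclass(frozen=True)
class Event:
    """Hypothetical normalized log record (real 4732 events carry a SID, not a name)."""
    time: datetime
    event_id: int
    target_user: str
    group: str = ""
    parent_process: str = ""  # recorded, but deliberately ignored by the rule


def find_backdoor_admins(events, window=timedelta(minutes=10),
                         admin_group="Administrators"):
    """Return accounts created and then added to the admin group within `window`."""
    created = {}  # target_user -> creation time
    hits = []
    for ev in sorted(events, key=lambda e: e.time):
        if ev.event_id == ACCOUNT_CREATED:
            created[ev.target_user] = ev.time
        elif ev.event_id == ADDED_TO_LOCAL_GROUP and ev.group == admin_group:
            t0 = created.get(ev.target_user)
            if t0 is not None and ev.time - t0 <= window:
                hits.append(ev.target_user)
    return hits


# The same outcome reached through two different launchers.
t = datetime(2025, 1, 1, 12, 0, 0)
for launcher in ("net.exe", "WmiPrvSE.exe"):
    stream = [
        Event(t, ACCOUNT_CREATED, "svc_backup", parent_process=launcher),
        Event(t + timedelta(seconds=5), ADDED_TO_LOCAL_GROUP, "svc_backup",
              group="Administrators", parent_process=launcher),
    ]
    print(launcher, find_backdoor_admins(stream))
```

Because the rule keys on what happened to the account rather than on which process launched the command, both launchers produce the same hit. The trade-off is that legitimate admin provisioning will match too, so a production rule would likely need an allowlist or additional context.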

T1486 — Encryption. Instead of dropping a known ransomware binary, the attacking agent, in an attempt to evade defense, used native PowerShell with built-in cryptographic APIs. This is a kind of living off the land. Our 0Labs defensive agent implemented a behavioral detection rule to catch mass file enumeration plus crypto API calls in a short window.
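A behavioral rule of this kind can be sketched as a per-process sliding window. The Python below is a simplified illustration under stated assumptions, not the rule 0Labs deployed: the event tuple layout, the `file_enum` and `crypto_call` labels, and the thresholds (30 seconds, 100 files, 20 crypto calls) are all hypothetical and would need tuning against real telemetry.

```python
from collections import defaultdict, deque

KINDS = ("file_enum", "crypto_call")  # hypothetical normalized event kinds


def detect_mass_encryption(events, window_s=30.0, min_files=100,
                           min_crypto_calls=20):
    """Flag process IDs that both enumerate many files and make many crypto
    API calls inside the same sliding time window.

    `events` is an iterable of (timestamp_seconds, pid, kind, detail) tuples.
    """
    recent = defaultdict(lambda: {k: deque() for k in KINDS})
    flagged = set()
    for ts, pid, kind, _detail in sorted(events, key=lambda e: e[0]):
        if kind not in KINDS:
            continue
        queues = recent[pid]
        queues[kind].append(ts)
        # Drop timestamps that have fallen out of the window.
        for q in queues.values():
            while q and ts - q[0] > window_s:
                q.popleft()
        if (len(queues["file_enum"]) >= min_files
                and len(queues["crypto_call"]) >= min_crypto_calls):
            flagged.add(pid)
    return flagged


# Synthetic example: pid 4242 enumerates and encrypts quickly,
# pid 100 only enumerates files (for example, a backup or indexing job).
events = (
    [(i * 0.1, 4242, "file_enum", f"doc{i}") for i in range(150)]
    + [(i * 0.5, 4242, "crypto_call", "AES") for i in range(30)]
    + [(i * 0.1, 100, "file_enum", f"doc{i}") for i in range(150)]
)
print(detect_mass_encryption(events))
```

Requiring both signals in the same window is what keeps the rule independent of the binary involved: a native PowerShell script and a dropped ransomware executable would look the same to it, while a process that only enumerates files would not trigger it.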

The pattern is the same in both cases: the attacking agent finds the weakness in brittle detection logic, evades, and forces the defensive agent to evolve the rule to catch the evasive behavior.

At 0Labs we are accelerating this feedback loop to predict and prevent the novel attacks that will eventually lead to strategic surprise. This hardens societal defenses against the adaptations AI agents will inevitably use.

AI is already changing the speed and scale of attacks. Sophistication gains, depending on how you define them, are real and will eventually surprise defenders. Genuinely novel attacks haven't been documented in the wild yet, which is exactly why 0Labs is preparing for them now.

Contact Us

Your detection stack needs to be tested against AI adversaries that adapt. 0Labs will run that test for you: continuous, AI-driven evasion testing against your detection logic that hardens your defenses before a real attacker finds the weaknesses.

Contact us to learn more.