Automated Purple Teaming: A Telco Scenario

Agentic purple teaming for the telecoms industry

Purple teaming validates whether your detections actually work against real attack techniques. It is the highest-signal security exercise an organization can run.

The problem is how rarely it happens. It requires specialist talent on both sides, coordination overhead, and engagement windows that compete with operational priorities. Most teams manage it quarterly at best.

Our agents run both sides. Given a threat report, a TTP, or a high-level objective, the red agent interprets the intelligence, plans the attack chain, and executes it autonomously. The blue agent evaluates what the detection stack caught, what it missed, and why. After each run, the red agent analyzes which phases were detected and adapts its tradecraft to evade them in the next iteration.
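The red/blue feedback loop in that paragraph can be sketched as a toy simulation. This is a minimal illustration under stated assumptions, not our platform's implementation. The phase names, the integer "variant" standing in for a tradecraft choice, and the `detect` callback standing in for the detection stack are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical phase names for the attack chain.
PHASES = ["initial_access", "credential_access", "lateral_movement",
          "persistence", "command_and_control", "exfiltration", "cleanup"]


@dataclass
class RedAgent:
    # Index of the tradecraft variant currently used for each phase.
    variants: dict = field(default_factory=lambda: {p: 0 for p in PHASES})

    def plan(self):
        """Return the (phase, variant) pairs for the next run."""
        return [(p, self.variants[p]) for p in PHASES]

    def adapt(self, detected):
        """Change tradecraft only for the phases the blue side caught."""
        for phase in detected:
            self.variants[phase] += 1


def blue_evaluate(executed, detect):
    """Return the phases the detection stack (the `detect` callback) flagged."""
    return {phase for phase, variant in executed if detect(phase, variant)}


def run_iterations(red, detect, rounds):
    """Run red/blue rounds and record which phases were detected in each."""
    history = []
    for _ in range(rounds):
        executed = red.plan()
        detected = blue_evaluate(executed, detect)
        history.append(sorted(detected))
        red.adapt(detected)
    return history


# Toy detection stack: catches the first persistence technique and the
# first two lateral movement techniques, nothing else.
CAUGHT = {("persistence", 0), ("lateral_movement", 0), ("lateral_movement", 1)}


def toy_detect(phase, variant):
    return (phase, variant) in CAUGHT


print(run_iterations(RedAgent(), toy_detect, 3))
# [['lateral_movement', 'persistence'], ['lateral_movement'], []]
```

In the toy run, the red side swaps tradecraft only for phases that were caught, so the set of detected phases shrinks each round. That shrinking set is what the next round of detection engineering works from.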

The output is validated detections, threat hunting queries, and improved detection rules, all tied to real telemetry.

The Scenario

A telecoms company told us they were concerned about covert threats targeting the industry. Their worry was specific: PRC-nexus cyber espionage groups hunting for call detail records. CDR data reveals who called whom, when, for how long, and from which cell tower. This kind of intelligence enables surveillance at scale without ever intercepting a conversation.

Google Threat Intelligence documented this campaign as GRIDTIDE, a global espionage operation by UNC2814 using the Google Sheets API as a command-and-control mechanism. Instructions and exfiltrated data are hidden inside spreadsheet cells, transmitted over legitimate HTTPS traffic to Google infrastructure. From the network's perspective, it looks like a business application talking to a Google service.

Building the Range

We designed a cyber range representing the client's infrastructure and the expected attack path: a simulated telecoms edge network, internal application servers, a database seeded with subscriber records, and a simulated Google Sheets API serving as the C2 endpoint. We deployed out-of-the-box Elastic Security and Elastic Defend with the same default rules that ship in production deployments.

Our red team agent planned and executed the full kill chain based on GRIDTIDE threat intelligence. Initial access through web application exploitation. Credential harvesting from application configuration. Lateral movement via SSH. Persistence through systemd. AES-encrypted C2 over HTTPS to the simulated Sheets API. Exfiltration of CDR data. Cleanup of forensic artifacts.

The full attack chain completed in an hour.

What the Agents Found

Our blue team agents evaluated detection performance across every phase and categorized each finding.

What was detected. Elastic's default ruleset fired on several phases of the attack, confirming that out-of-the-box coverage works for well-known TTPs.

What was not detected. Four phases produced no alerts despite generating telemetry.

Credential harvesting from .env files. Over 63,000 file access events were captured, and none triggered an alert. No default rule evaluates access to application configuration files against process lineage or user context. The data was there. The logic wasn't. This is a detection rule gap. In the short term, a custom rule correlating file access with process context addresses it. In the longer term, migrating secrets out of .env files into a secrets manager removes the target.
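A rule of the kind described above can be sketched in a few lines. This is a minimal sketch with several assumptions:

- Events arrive as flattened dictionaries keyed by Elastic Common Schema field names (`file.path`, `process.name`, `process.parent.name`, `user.name`).
- The allowlist of expected readers is hypothetical and would come from each application's own baseline.
- The set of suspicious parent processes is likewise hypothetical.

```python
from pathlib import PurePosixPath

# Hypothetical baseline: (process.name, user.name) pairs expected to read config.
EXPECTED_READERS = {("gunicorn", "appsvc"), ("node", "appsvc")}

# Interactive shells and interpreters rarely need to read an app's secrets file.
SUSPICIOUS_PARENTS = {"bash", "sh", "dash", "zsh", "python3", "perl"}


def is_app_config(path):
    """True for .env and variants such as .env.production."""
    name = PurePosixPath(path).name
    return name == ".env" or name.startswith(".env.")


def flag_env_access(event):
    """Return a reason string if a config-file read looks suspicious, else None."""
    if not is_app_config(event["file.path"]):
        return None
    reader = (event["process.name"], event["user.name"])
    if reader in EXPECTED_READERS:
        return None
    parent = event.get("process.parent.name")
    if parent in SUSPICIOUS_PARENTS:
        return f"{reader[0]} (parent {parent}) read {event['file.path']} as {reader[1]}"
    return f"unexpected reader {reader[0]} read {event['file.path']} as {reader[1]}"


events = [
    {"file.path": "/srv/app/.env", "process.name": "gunicorn",
     "process.parent.name": "systemd", "user.name": "appsvc"},
    {"file.path": "/srv/app/.env", "process.name": "cat",
     "process.parent.name": "bash", "user.name": "www-data"},
    {"file.path": "/srv/app/app.py", "process.name": "cat",
     "process.parent.name": "bash", "user.name": "www-data"},
]
for ev in events:
    print(flag_env_access(ev))
```

The design choice is to score the reader rather than the file. Reads by the application's own service account stay quiet, while a shell or interpreter reading the same file surfaces.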

Bash history cleanup. History was cleared on two compromised hosts. No alert fired. A well-documented anti-forensics technique with no corresponding rule enabled. A configuration gap, fixable with a targeted rule and centralized history forwarding.
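A sketch of the command-line side of such a rule follows. It matches patterns commonly associated with MITRE ATT&CK T1070.003 (Clear Command History), and the pattern list is illustrative rather than exhaustive.

One caveat matters for endpoint telemetry. `history -c`, `unset HISTFILE`, and `set +o history` are shell builtins, so they never appear as process executions unless wrapped in something like `bash -c`. File events showing the history file being deleted or truncated are a more dependable endpoint signal. Forwarding history off-host also preserves it before it can be cleared.

```python
import re

# Illustrative patterns for T1070.003 (Clear Command History); not exhaustive.
HISTORY_TAMPERING = {
    "history_clear": re.compile(r"\bhistory\s+-c\b"),
    "unset_histfile": re.compile(r"\bunset\s+HISTFILE\b"),
    "histfile_devnull": re.compile(r"\bHISTFILE=/dev/null\b"),
    "histsize_zero": re.compile(r"\bHIST(?:FILE)?SIZE=0\b"),
    "disable_history": re.compile(r"\bset\s+\+o\s+history\b"),
    # rm/shred/truncate as the command itself (start of line or after ; & |),
    # so "grep rm ~/.bash_history" does not match.
    "delete_history_file": re.compile(
        r"(?:^|[;&|]\s*)(?:sudo\s+)?(?:rm|shred|truncate)\b[^;&|]*\.bash_history\b"),
    "symlink_to_devnull": re.compile(
        r"\bln\s+-\w*s\w*\s+/dev/null\s+\S*\.bash_history\b"),
    # A single '>' truncates; '>>' appends and is deliberately excluded.
    "truncate_redirect": re.compile(r"(?<!>)>(?!>)\s*\S*\.bash_history\b"),
}


def history_tampering(command_line):
    """Return the names of tampering patterns found in a command line."""
    return [name for name, rx in HISTORY_TAMPERING.items() if rx.search(command_line)]


for cmd in ["bash -c 'history -c'", "cat /dev/null > ~/.bash_history",
            "echo ls >> ~/.bash_history", "grep rm ~/.bash_history"]:
    print(cmd, "->", history_tampering(cmd))
```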

C2 over HTTPS. The C2 channel operated as a Python process making HTTPS connections to the simulated Sheets API. Endpoint telemetry captured process and network metadata, but the behavior was architecturally indistinguishable from legitimate application traffic. This is not a rule gap. It is a visibility boundary: endpoint telemetry alone cannot reliably distinguish malicious encrypted C2 from legitimate HTTPS application traffic. Detection here requires network-level controls. These include behavioral baselining of outbound connections and outbound traffic governance restricting which processes and hosts may reach external APIs. Where feasible, JA3/JA4 fingerprinting can also surface anomalous TLS signatures.
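Behavioral baselining of outbound connections can be sketched as a per-process egress profile. This is a minimal sketch with two assumptions:

- Network telemetry attributes each connection to a host, a process, and a destination name, for example from DNS or the TLS SNI field.
- The class and method names are hypothetical.

Keying the baseline on the (host, process) pair rather than the destination alone matters here. A destination-only baseline would wave through traffic to a Google API domain that legitimate applications already use.

```python
from collections import defaultdict


class EgressBaseline:
    """Per-(host, process) profile of outbound destinations."""

    def __init__(self):
        self.profile = defaultdict(set)
        self.learning = True

    def observe(self, host, process, destination):
        """Learn during the baseline window; afterwards return an alert string
        for destinations this (host, process) pair has never contacted."""
        key = (host, process)
        if self.learning:
            self.profile[key].add(destination)
            return None
        if destination not in self.profile.get(key, set()):
            return f"{process} on {host} contacted new destination {destination}"
        return None

    def freeze(self):
        """End the learning window and start alerting."""
        self.learning = False


baseline = EgressBaseline()
# Learning window: a Java service on the app server talks to a partner API
# and to Google Cloud Storage.
baseline.observe("app01", "java", "api.partner.example")
baseline.observe("app01", "java", "storage.googleapis.com")
baseline.freeze()

print(baseline.observe("app01", "java", "storage.googleapis.com"))
# None
print(baseline.observe("app01", "python3", "sheets.googleapis.com"))
# python3 on app01 contacted new destination sheets.googleapis.com
```

In practice, the learning window and any pre-approved destinations would follow the outbound traffic governance policy rather than whatever happened to be observed.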

Data exfiltration. 58.6 KB of AES-encrypted CDR data left the network as chunked HTTPS POSTs over the same C2 channel. Same architectural blind spot. Detection requires the same network-level investment, plus data access monitoring. The act of an unauthorized process querying and staging CDR data is itself a detectable signal upstream of exfiltration.
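Data access monitoring upstream of exfiltration can be sketched as a check on database audit events. This is a minimal sketch under assumed names. The `QueryEvent` shape, the `cdr_records` table, the approved (database user, client program) pairs, and the bulk-read threshold are all hypothetical. Each would be set from the client's own access model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueryEvent:
    db_user: str
    client_program: str
    table: str
    rows_returned: int


# Hypothetical access policy for CDR data.
CDR_TABLES = {"cdr_records"}
APPROVED_READERS = {("billing_svc", "billing-worker"), ("reporting_svc", "report-runner")}
BULK_ROW_THRESHOLD = 10_000  # rows per query before it looks like staging


def assess(event):
    """Return findings for a query touching CDR data (empty list if benign)."""
    if event.table not in CDR_TABLES:
        return []
    findings = []
    if (event.db_user, event.client_program) not in APPROVED_READERS:
        findings.append("unapproved reader")
    if event.rows_returned > BULK_ROW_THRESHOLD:
        findings.append("bulk read")
    return findings


print(assess(QueryEvent("app", "python3", "cdr_records", 50_000)))
print(assess(QueryEvent("billing_svc", "billing-worker", "cdr_records", 200)))
```

The point of checking at the database is timing. The staging query happens before any data leaves, and its signal does not depend on seeing inside encrypted traffic.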

Telemetry gaps. The C2 and exfiltration findings are not failures of the detection stack. They are boundaries of what endpoint telemetry can see. Closing them requires different data sources entirely.

Detection Engineering

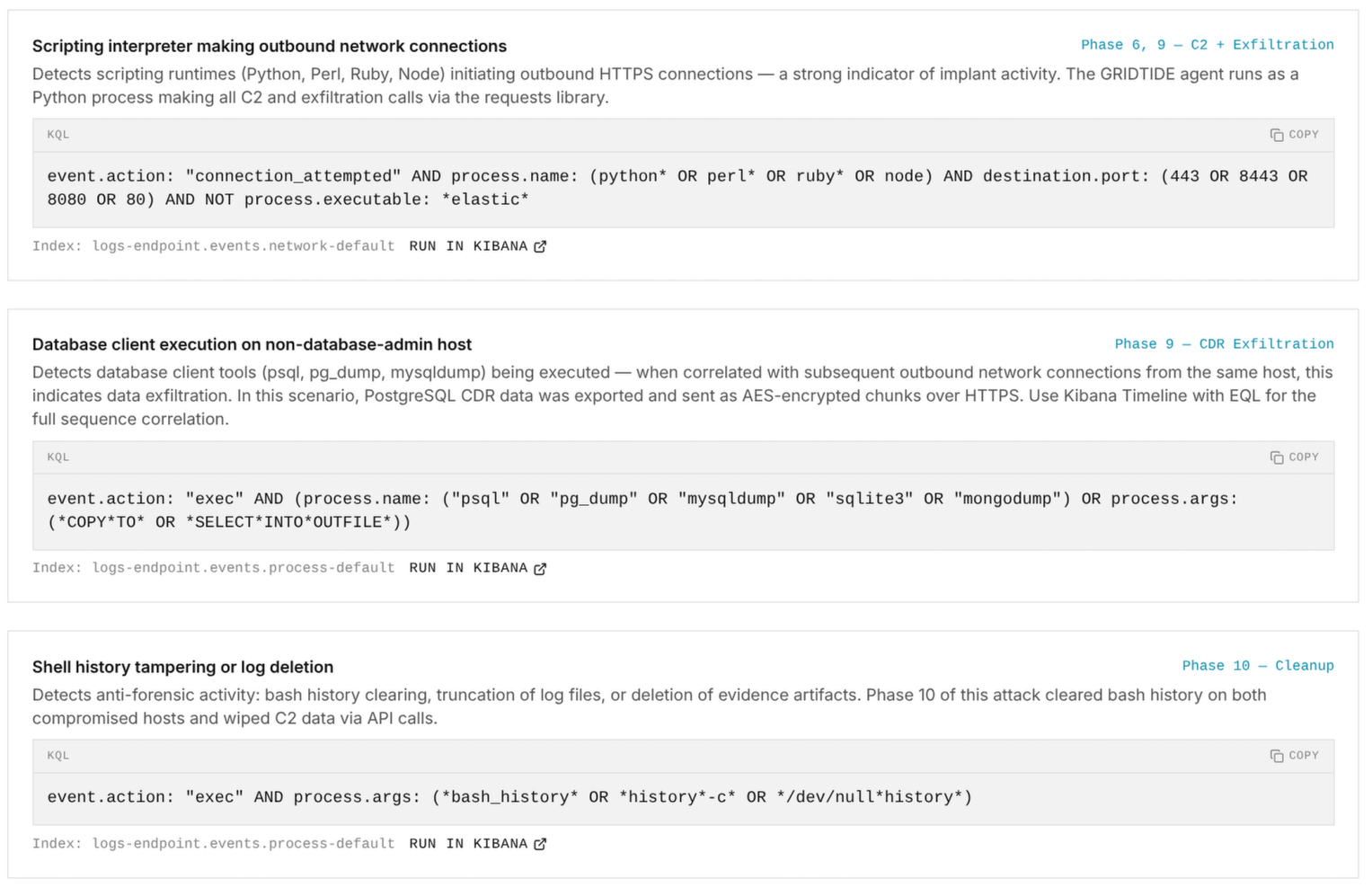

Our agents generated threat hunting queries and detection rules across all phases of the attack. The principle is to detect the tradecraft, not the tool.

Consider how a traditional IOC-based approach would handle GRIDTIDE. You would write individual rules matching the known malware hash, the specific C2 spreadsheet ID, the service account name, the binary path /usr/sbin/xapt, and the attacker's IP ranges. Five rules, each brittle. Each one dies the moment UNC2814 changes the artifact it matches:

- recompiling the binary defeats the hash rule;
- rotating infrastructure defeats the spreadsheet ID, service account, and IP rules;
- renaming the file defeats the path rule.

A single behavioral rule replaces all five: alert when a non-package-manager process creates a new systemd service unit, and that service spawns a child process that initiates outbound HTTPS connections to a cloud API endpoint not present in the application's known dependency list. This detects the tradecraft pattern (persistence followed by C2 establishment) regardless of which actor, binary, or infrastructure is behind it. It would catch GRIDTIDE. It would also catch the next campaign that uses the same technique with entirely different IOCs.
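The behavioral rule above can be sketched as a stateful correlation over time-ordered events. This is a minimal sketch using a simplified, hypothetical event schema rather than Elastic's own. Its assumptions and limits:

- Each process start event carries the name of the systemd unit it belongs to, for example derived from the process's cgroup.
- The cloud API suffixes and the per-host dependency list are illustrative.
- It ignores PID reuse.
- It only tracks processes started after the unit file was written.

```python
from pathlib import PurePosixPath

PACKAGE_MANAGERS = {"dpkg", "apt", "apt-get", "rpm", "dnf", "yum"}
UNIT_DIRS = {"/etc/systemd/system", "/lib/systemd/system", "/usr/lib/systemd/system"}
# Illustrative cloud API suffixes and a hypothetical per-host dependency list.
CLOUD_API_SUFFIXES = (".googleapis.com", ".amazonaws.com", ".azure.com")
KNOWN_DEPENDENCIES = {"app01": {"storage.googleapis.com"}}


def correlate(events):
    """Alert when a non-package-manager process writes a systemd unit, and a
    child of that service later connects over HTTPS to an unlisted cloud API.
    `events` must be sorted by timestamp."""
    suspicious_units = set()  # (host, unit name)
    service_mains = {}        # (host, pid) -> unit, for processes systemd started
    descendants = {}          # (host, pid) -> unit, for processes they spawned
    alerts = []
    for ev in events:
        host, kind = ev["host"], ev["type"]
        if kind == "file_create":
            path = PurePosixPath(ev["path"])
            if (path.suffix == ".service" and str(path.parent) in UNIT_DIRS
                    and ev["process"] not in PACKAGE_MANAGERS):
                suspicious_units.add((host, path.name))
        elif kind == "process_start":
            key, parent = (host, ev["pid"]), (host, ev["ppid"])
            if ev["ppid"] == 1 and (host, ev.get("unit")) in suspicious_units:
                service_mains[key] = ev["unit"]
            elif parent in service_mains or parent in descendants:
                descendants[key] = service_mains.get(parent) or descendants[parent]
        elif kind == "network_connect" and (host, ev["pid"]) in descendants:
            dest = ev["destination"]
            if (ev["port"] == 443 and dest.endswith(CLOUD_API_SUFFIXES)
                    and dest not in KNOWN_DEPENDENCIES.get(host, set())):
                alerts.append({"host": host, "unit": descendants[(host, ev["pid"])],
                               "destination": dest})
    return alerts


def scenario(writer):
    """Hypothetical event sequence; `writer` is the process that writes the unit."""
    return [
        {"host": "app01", "type": "file_create",
         "path": "/etc/systemd/system/example.service", "process": writer},
        {"host": "app01", "type": "process_start", "pid": 500, "ppid": 1,
         "unit": "example.service"},
        {"host": "app01", "type": "process_start", "pid": 501, "ppid": 500},
        {"host": "app01", "type": "network_connect", "pid": 501, "port": 443,
         "destination": "sheets.googleapis.com"},
        {"host": "app01", "type": "network_connect", "pid": 501, "port": 443,
         "destination": "storage.googleapis.com"},
    ]


print(correlate(scenario("bash")))  # one alert, for sheets.googleapis.com
print(correlate(scenario("dpkg")))  # [] because a package manager wrote the unit
```

Nothing in the logic names a hash, spreadsheet ID, binary path, or IP range. Renaming the unit, or moving to another cloud API on the suffix list, still matches as long as the destination is outside the host's dependency list.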

Every query we generated was validated against the real telemetry from the simulation, with matches confirmed against actual event data in Elastic. With these rules deployed, the techniques that were invisible in the initial run become detectable. The C2 and exfiltration phases also depend on the network-level data sources identified above.
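Validation of this kind can be sketched as replaying recorded simulation events through each candidate rule and checking which attack phases it covers. This is a minimal sketch with two assumptions. First, the simulation labels each recorded event with the attack phase that produced it (the `phase` field is hypothetical). Second, rules are plain predicates over events rather than Elastic queries.

```python
def phase_coverage(rules, events):
    """Map each attack phase to the set of rule names that matched its events."""
    coverage = {}
    for ev in events:
        matched = coverage.setdefault(ev["phase"], set())
        for name, rule in rules.items():
            if rule(ev):
                matched.add(name)
    return coverage


def uncovered_phases(coverage):
    """Phases whose recorded telemetry no rule matched."""
    return sorted(phase for phase, names in coverage.items() if not names)


# Toy rules and recorded events (hypothetical field names).
rules = {
    "env_file_read": lambda ev: ev.get("file", "").endswith("/.env"),
    "history_clear": lambda ev: "history -c" in ev.get("cmd", ""),
}
recorded = [
    {"phase": "credential_access", "file": "/srv/app/.env"},
    {"phase": "cleanup", "cmd": "bash -c 'history -c'"},
    {"phase": "command_and_control", "dest": "sheets.googleapis.com"},
]

coverage = phase_coverage(rules, recorded)
print(uncovered_phases(coverage))  # ['command_and_control']
```

A phase with no matching rule, such as command and control in the toy data, points to a gap. Closing it needs either a new rule or, as with the HTTPS channel in this scenario, a different data source.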

Built to Run Again

This attack took an hour. Our platform is designed to run it again with a different evasion strategy, test whether the new rules hold, and surface the next layer of weaknesses. The agents adapt. They attempt to bypass newly deployed detections and find what still breaks. Each iteration produces tighter coverage.

This scenario ran on our own ranges. We also deploy into sandboxed environments on client infrastructure, running against their actual detection stack, their telemetry, their rule configuration. The closer the environment is to production, the more the results mean.

Every time you simulate an attack, your defense improves.